Blueprint Resource Library

Kickstart your digital transformation with our most helpful RPA resources, including infographics, videos, and more!

Featured

Process Governance & Compliance

Most Recent

Smarter, Faster, Riskier? Rethinking Automation in the Age of Agentic AI

Most Recent

Datasheets

Datasheets

Read Now

Technical Overview: Blueprint RPA Migration Solution

Datasheets

Read Now

Blueprint + Blue Prism Integration Datasheet

Datasheets

Read Now

RPA Platform Migration Datasheet

Datasheets

Read Now

Blueprint Platform Datasheet

Datasheets

Read Now

Replatform from Automation Anywhere to Microsoft Power Automate

Datasheets

Read Now

Replatform from Blue Prism to Microsoft Power Automate

Datasheets

Read Now

Blueprint Process Analysis: Understand your Processes, Improve your Business

Datasheets

Read Now

Blueprint RPA Analytics

Datasheets

Read Now

Value Map Assessment ROI Brochure

Datasheets

Read Now

Automation Re-platforming ROI Brochure

Datasheets

Read Now

Migrate to Microsoft Power Automate with Blueprint

Datasheets

Read Now

Blueprint Assess Datasheet

Datasheets

Read Now

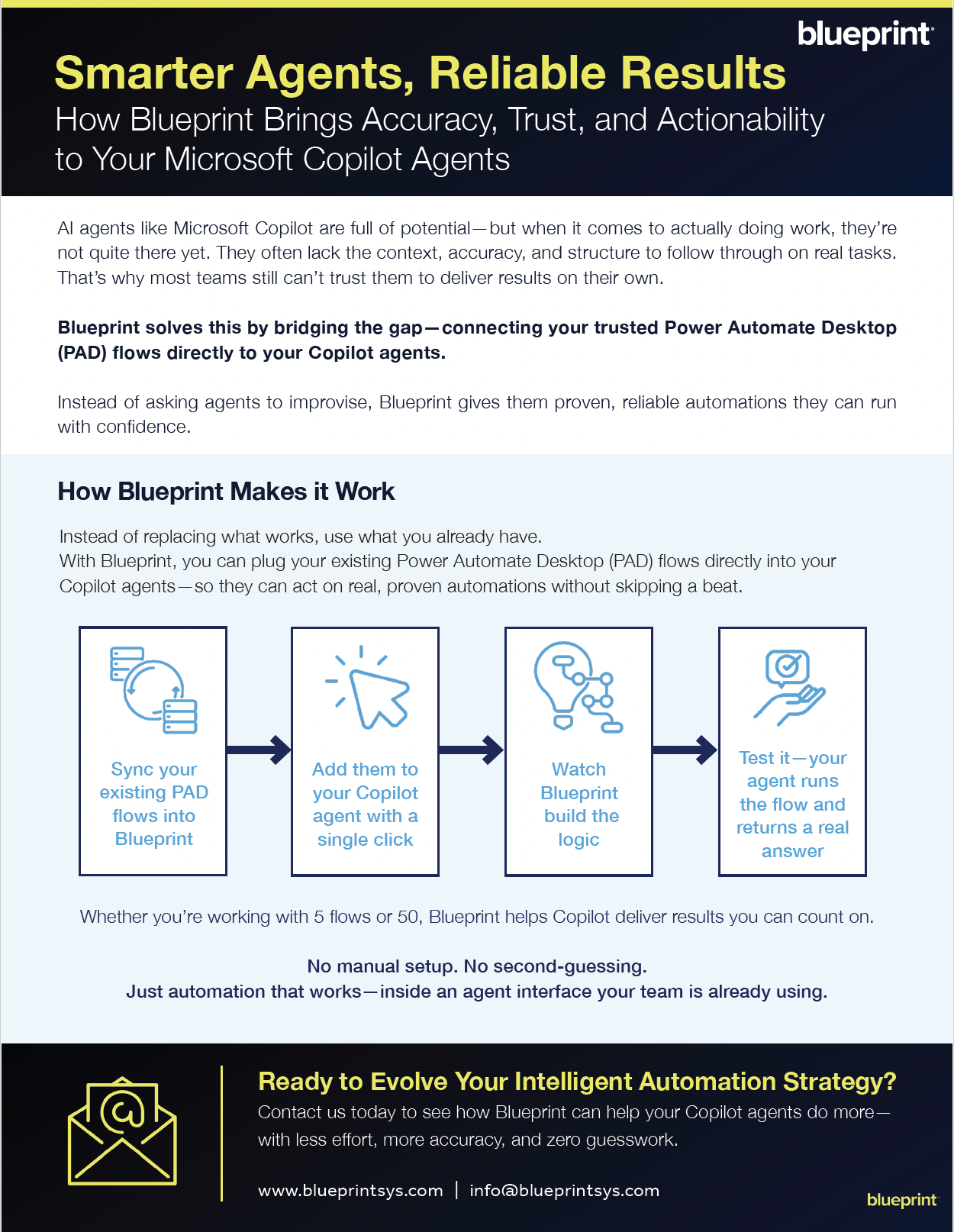

Smarter Agents, Reliable Results: How Blueprint Brings Accuracy, Trust, and Actionability to Your Microsoft Copilot Agents

Infographics

Infographics

Read Now

RPA Migrations in 2023 Research Report - Top 5 Stats

Infographics

Read Now

RPA Glossary

Infographics

Read Now

6 Considerations for When to Use RPA in Finance and Accounting

Infographics

Read Now

Benefits of Automation Re-Platforming with Blueprint

Infographics

Read Now

How much does Robotic Process Automation (RPA) Really Cost?

Infographics

Read Now

10 Metrics You Should be Tracking to Drive RPA Success

Infographics

Read Now

6 Easy Steps for Implementing Enterprise Business Process Analysis (EBPA)

Infographics

Read Now

Five Business Process Analysis (BPA) Techniques You Should Know

Infographics

Read Now

Nine Principles of Continuous Process Improvement

Infographics

Read Now

10 Reasons Microsoft Power Automate is the Right Move for You

Infographics

Read Now

4 Ways Microsoft Power Automate Solves 4 Automation Challenges

Product Demos

Product Demos

Watch Now

RPA Migration - Automation Anywhere to Power Automate Product Demo

Product Demos

Watch Now

RPA Platform Migration: How Blueprint Makes RPA Migration Possible

Product Demos

Watch Now

How to Analyze Business Processes, Identify Inefficiencies, and Drive Continuous Improvement

Product Demos

Watch Now

RPA Migration - Blue Prism to Power Automate Product Demo

Product Demos

Watch Now

RPA Migration - Automation Anywhere to Power Automate Product Demo

Product Demos

Watch Now

RPA Migration - UiPath to Power Automate Product Demo

Product Demos

Watch Now

Blueprint's RPA Analytics

Videos

Videos

Watch Now

Scale RPA Enterprise-Wide with Digital Blueprints - Explainer Video

Videos

Watch Now

Blueprint for Enterprise Process Automation - Explainer Video

Videos

Watch Now

Accelerate Automation Replatforming with Blueprint - Explainer Video

Videos

Watch Now

Rapid Transformation: Migrating to Power Automate with Blueprint

Whitepapers/eBooks

Whitepapers/eBooks

Read Now

The Business Case for Migrating Your RPA Estate to Microsoft Power Automate

Whitepapers/eBooks

Read Now

RPA Migration in 2023 - Research Report

Whitepapers/eBooks

Read Now

RPA Migration Best Practices

Whitepapers/eBooks

Read Now

The State of RPA in 2023 - Research Report

Whitepapers/eBooks

Read Now

2024 RPA Predictions

Whitepapers/eBooks

Read Now

AI (Artificial Intelligence) in RPA

Whitepapers/eBooks

Read Now

Best Practices for Small RPA Migrations

Whitepapers/eBooks

Read Now

RPA Migration Checklist

Whitepapers/eBooks

Read Now

7 Ways to Optimize RPA Governance and Maximize ROI

Whitepapers/eBooks

Read Now

Understanding RPA's Total Cost of Ownership (TCO) and How to Lower It

Whitepapers/eBooks

Read Now

RPA Process Assessment and Candidate Checklist

Whitepapers/eBooks

Read Now

How to Measure a Successful RPA Platform Migration

Whitepapers/eBooks

Read Now

Embracing Process Excellence - Whitepaper

Whitepapers/eBooks

Read Now

Process Modernization in 2022 - Research Report

Whitepapers/eBooks

Read Now

Process Improvement Checklist

Whitepapers/eBooks

Read Now

Cloud Automation vs On-Premises: Which Should you Choose?

Whitepapers/eBooks

Read Now

Automation Anywhere and Blueprint

Whitepapers/eBooks

Read Now

Digital Twins Whitepaper

Whitepapers/eBooks

Read Now

Intelligent Automation & RPA: 2022 Trends and Predictions for 2023

Whitepapers/eBooks

Read Now

A Guide to RPA Migration

Whitepapers/eBooks

Read Now

AI in RPA

Whitepapers/eBooks

Read Now

Automation Sprawl

Whitepapers/eBooks

Read Now

Automation Security

Whitepapers/eBooks

Read Now

Future-Proofing Automation Compliance

Templates

Templates

Read Now

Improve Your RPA Center of Excellence (CoE) - Cheat Sheet

Templates

Read Now

A Template to Setting Up an RPA Center of Excellence (CoE)

Templates

Read Now

To-do List for Scaling Robotic Process Automation

Templates

Read Now

Process Decomposition Template

Most Recent

Most Recent

Read Now

Automation Audit Checklist

Learning Centers

Learning Centers

Read Now

How to Implement RPA Enterprise-Wide

Learning Centers

Read Now

Best Practices for Achieving RPA at Scale

Learning Centers

Read Now

What is Robotic Process Automation?

Learning Centers

Read Now

Automation Re-Platforming: Frequently Asked Questions

On Demand Webinars

On Demand Webinars

Watch Now

Who Moved My Bots?! Expert insights on migrating automation programs

On Demand Webinars

Watch Now

The RPA Metrics You Should be Tracking to Drive ROI

On Demand Webinars

Watch Now

Rumble in the Automation Jungle: Manual Migration vs Blueprint's Migration Assistant - What's Better, Faster, & Cheaper?

On Demand Webinars

Watch Now

On-Demand Webinar: Breaking the Cycle of Process Debt

On Demand Webinars

Watch Now

Cut Costs, Not Corners: Mastering a LoCode Digital Strategy and RPA Migration

On Demand Webinars

Watch Now

WEBINAR: Mastering RPA Migration: Strategies for Seamless Transitions and Cost Efficiency

Upcoming Events

Upcoming Events

Watch On-Demand

Fix the Faucet: Stop Automation Cost Leaks Before They Drain Your ROI

Most Recent

Most Recent

Watch On-Demand

Smarter, Faster, Riskier? Rethinking Automation in the Age of Agentic AI
